Earth AI: Ask Google Earth

Technical Lead & Computational Design Engineer

2024–Present · Google

Designed and built Google's first geospatial reasoning agent, connecting satellite imagery, weather, and population data so scientists and policymakers can query planetary-scale datasets in natural language. I worked across the stack, focusing on the agent architecture that makes the trust-building HCI patterns possible.

The Problem: Domain experts can't query planetary data without GIS expertise.

Domain experts in public health and climate science need to analyze planetary-scale datasets (satellite imagery, weather, population), but traditional GIS workflows are fragmented. Furthermore, standard LLMs have no inherent understanding of geospatial data: satellite imagery, coordinate systems, and spatial relationships are outside their training distribution, making their outputs unreliable for domain-critical decisions.

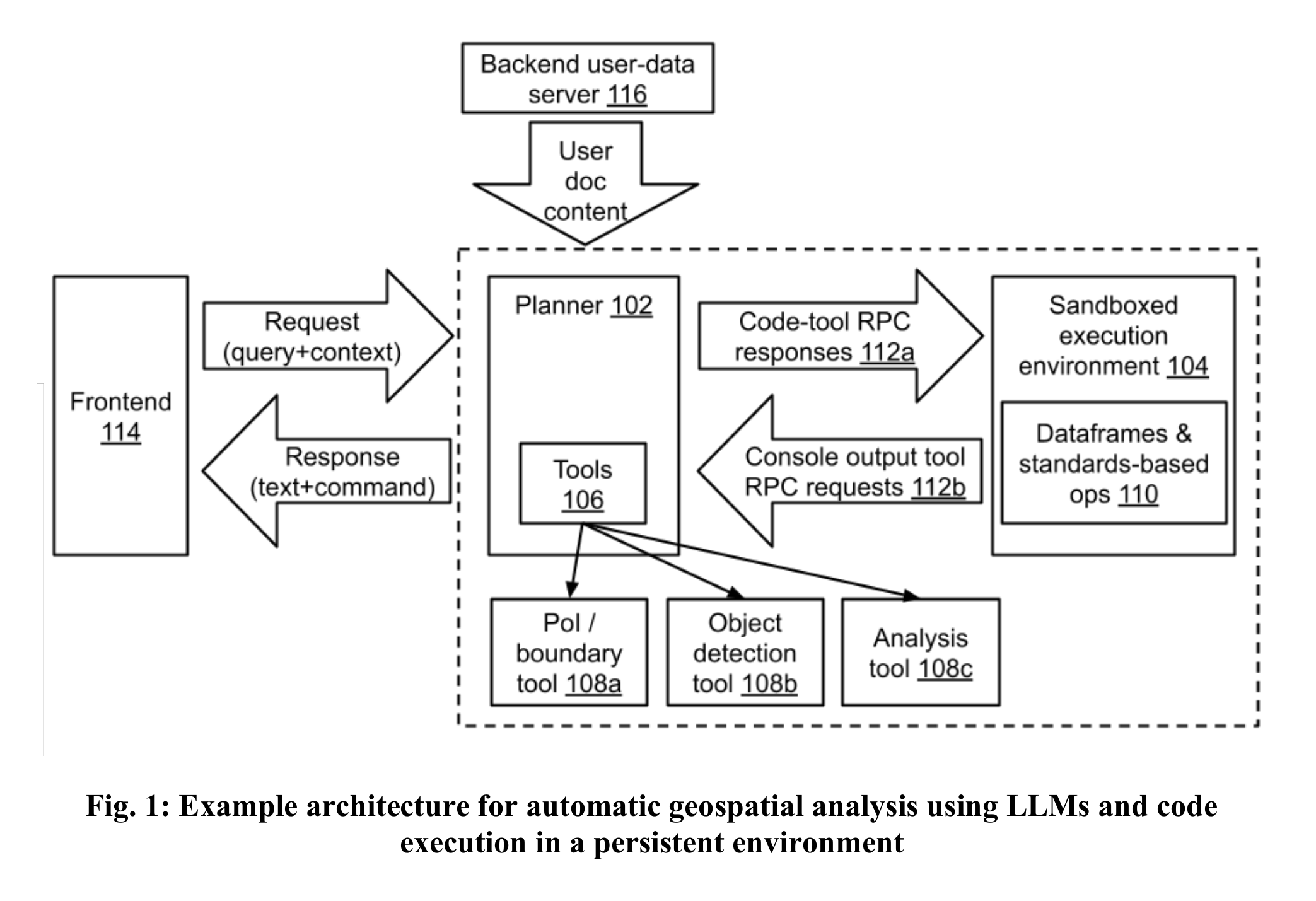

The Architecture: A planner-executor loop with grounded outputs.

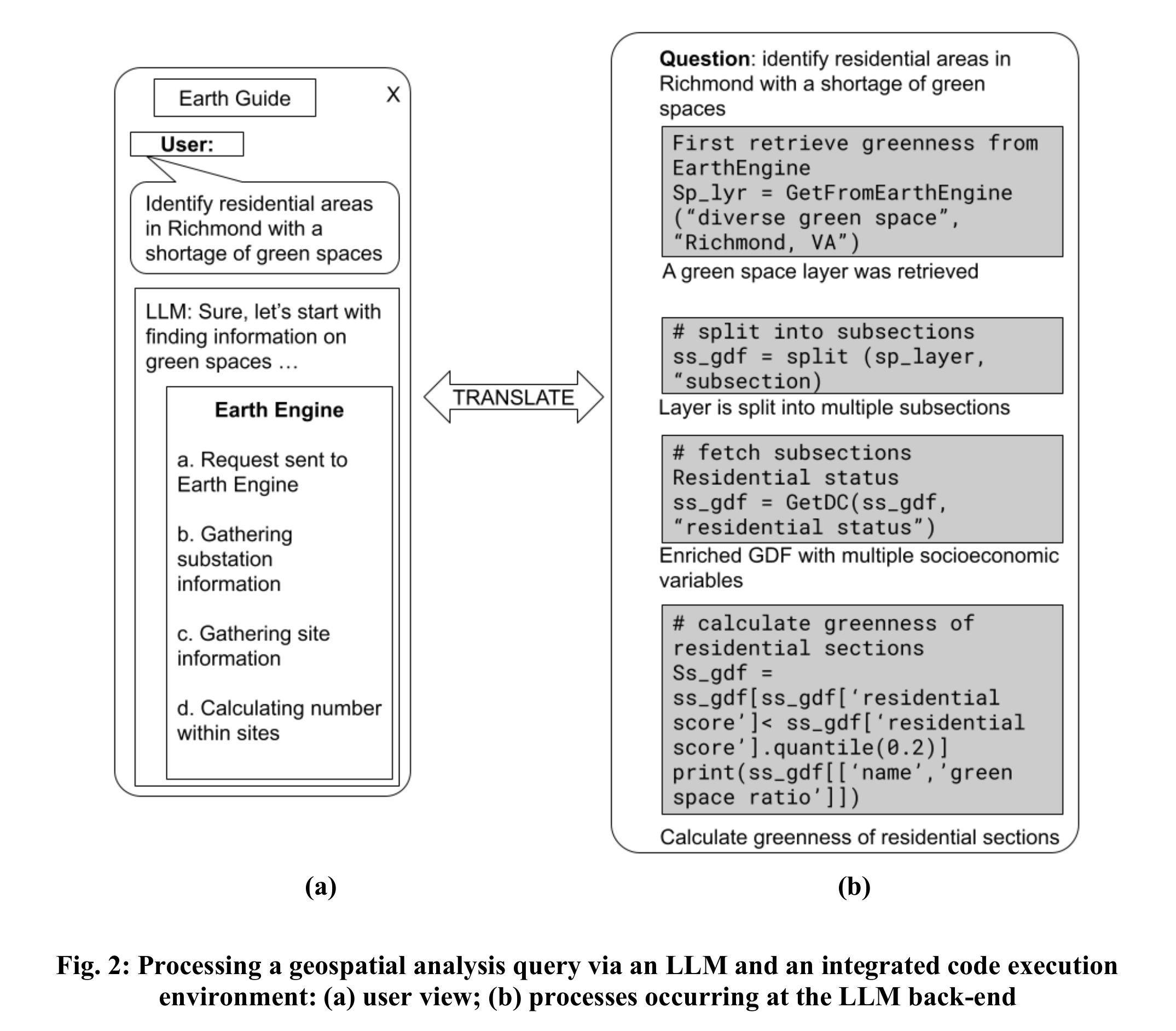

I identified the tools most critical to GIS work and evaluated them for compatibility with domain workflows. I designed the planner-executor loop, which uses Gemini to decompose multi-step spatial queries into actionable tool selections without hallucinating. I also built a persistent, sandboxed code execution environment that the planner communicates with via RPC requests, so that state persists across follow-up queries and the LLM never accesses raw data directly.
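A minimal Python sketch of this pattern, not the production implementation. Every name in it (`Step`, `Sandbox`, `TOOLS`, `plan`, `run_query`, the two toy tools) is hypothetical. The planner is a rule-based stand-in for the LLM, and the RPC boundary is simulated with an in-process method call. It illustrates three ideas from the paragraph above: the planner may only select from a fixed tool registry, state lives in the sandbox so follow-up queries can reuse earlier results, and only a summary (never the raw data) is returned to the planner.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Step:
    tool: str             # name of the tool the planner selected
    args: dict[str, Any]  # keyword arguments for that tool
    save_as: str          # sandbox variable that will hold the result


# Toy tools (hypothetical). Each receives the sandbox state plus its arguments.
def load_regions(state: dict, dataset: str) -> list[dict]:
    # In-memory rows standing in for a real population dataset.
    return [
        {"name": "A", "population": 1200},
        {"name": "B", "population": 50},
        {"name": "C", "population": 800},
    ]


def filter_by_population(state: dict, source: str, minimum: int) -> list[dict]:
    return [row for row in state[source] if row["population"] >= minimum]


# The fixed registry: the planner can only choose tools listed here.
TOOLS: dict[str, Callable[..., Any]] = {
    "load_regions": load_regions,
    "filter_by_population": filter_by_population,
}


@dataclass
class Sandbox:
    """Stand-in for the persistent execution environment."""

    state: dict[str, Any] = field(default_factory=dict)

    def handle_request(self, step: Step) -> dict[str, Any]:
        # Assumption: in a real system this would be an RPC handler
        # running in a separate, isolated process.
        if step.tool not in TOOLS:
            return {"ok": False, "error": f"unknown tool: {step.tool}"}
        result = TOOLS[step.tool](self.state, **step.args)
        self.state[step.save_as] = result  # persists across queries
        # Only a summary crosses the boundary; raw rows stay in the sandbox.
        return {"ok": True, "saved_as": step.save_as, "size": len(result)}


def plan(query: str) -> list[Step]:
    """Rule-based stand-in for the LLM planner (hypothetical)."""
    if "populated" in query:
        return [
            Step("load_regions", {"dataset": "population"}, "regions"),
            Step("filter_by_population", {"source": "regions", "minimum": 500}, "dense"),
        ]
    if "stricter" in query:
        # Follow-up query: reuses "regions" already held in the sandbox.
        return [Step("filter_by_population", {"source": "regions", "minimum": 1000}, "dense")]
    return []


def run_query(query: str, sandbox: Sandbox) -> list[dict[str, Any]]:
    """Execute each planned step in order and collect the summaries as a trace."""
    trace = []
    for step in plan(query):
        reply = sandbox.handle_request(step)
        trace.append(reply)
        if not reply["ok"]:
            break  # stop at the first failed step
    return trace


sandbox = Sandbox()
first = run_query("which regions are populated?", sandbox)
follow_up = run_query("make that stricter", sandbox)
print(first)
print(follow_up)
```

The follow-up query never reloads the dataset: it filters the `regions` variable that the first query left in the sandbox. The trace of summaries is the kind of record a step-by-step interface could surface to the user.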

System architecture — planner-executor loop with sandboxed execution.

User-facing vs backend — separation of concerns for verifiable outputs.

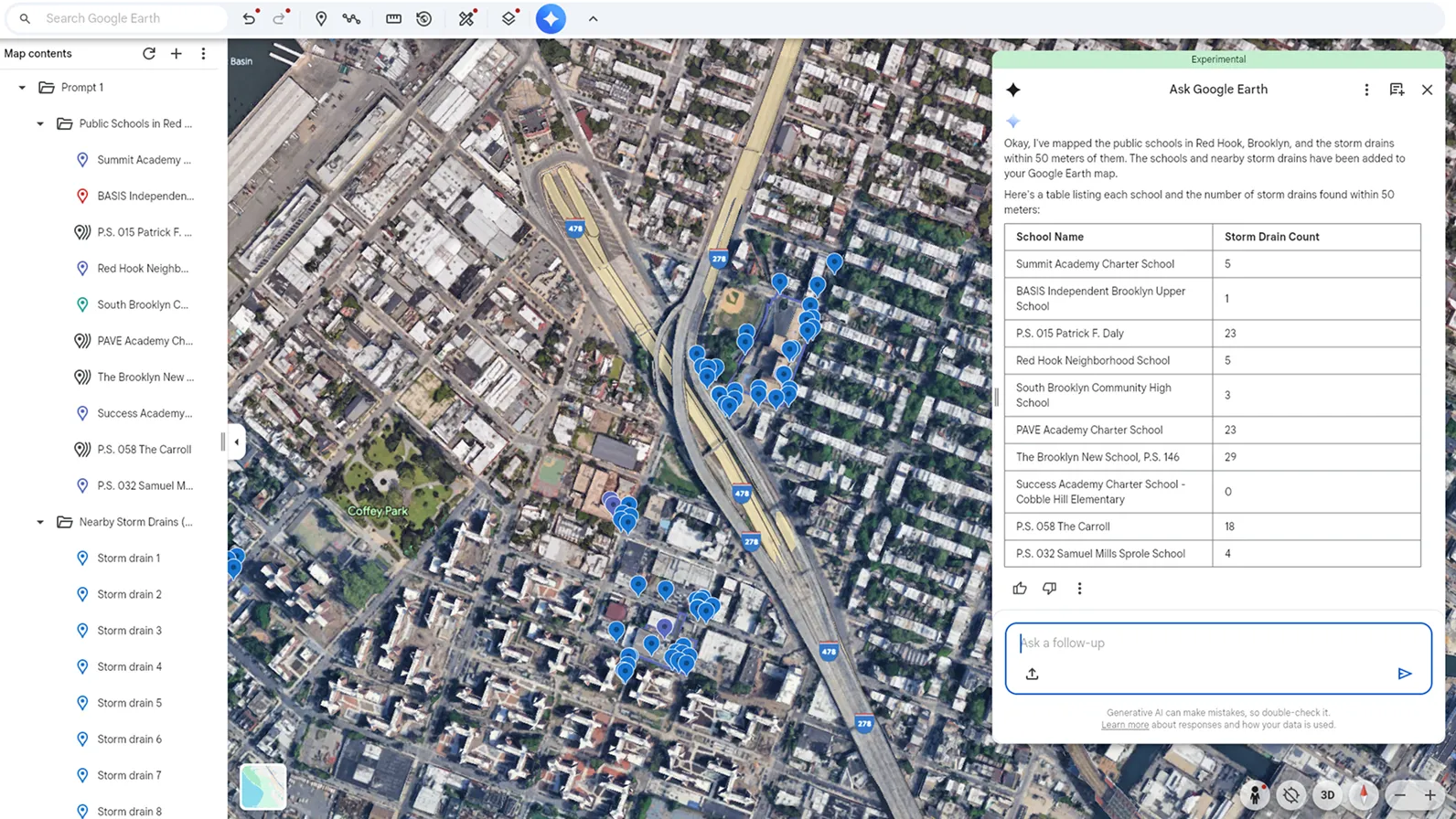

The Interaction: Step-by-step transparency and traceable data.

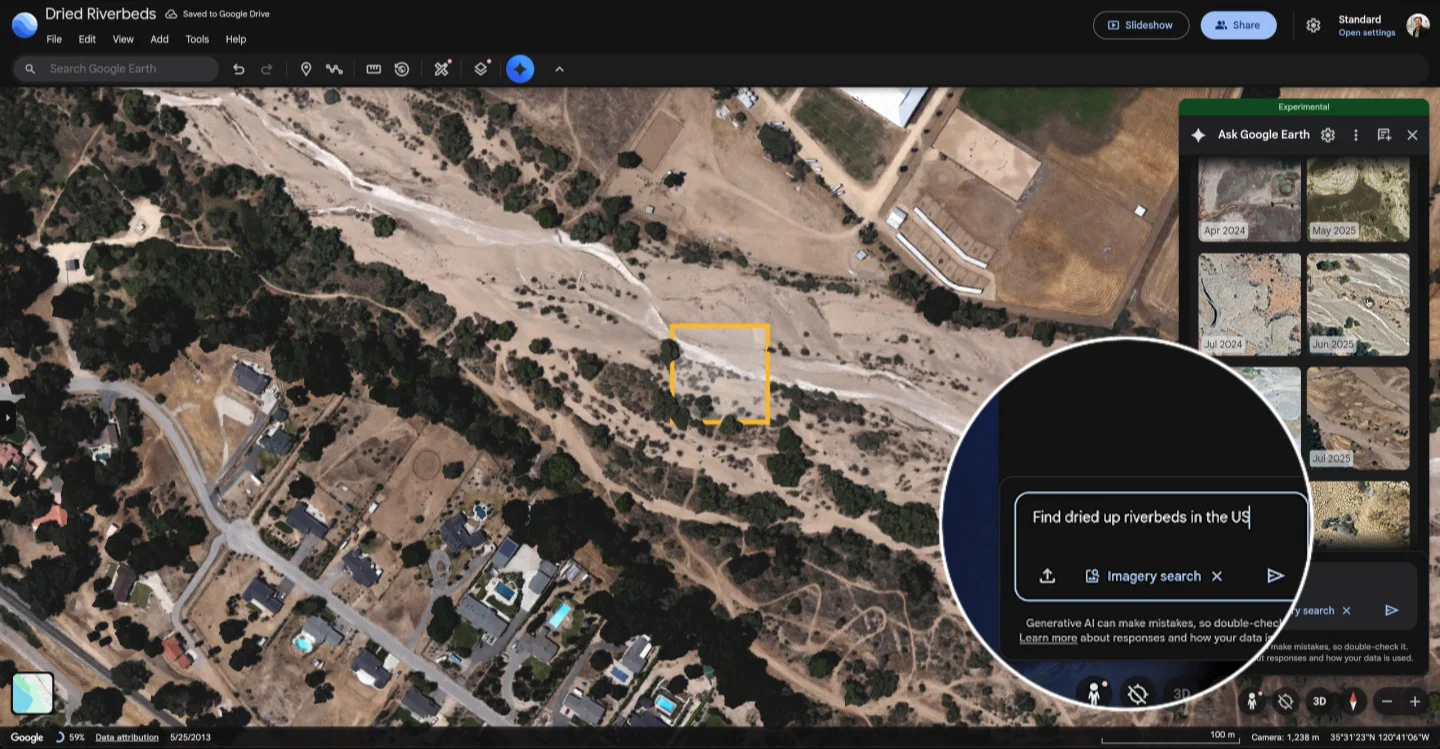

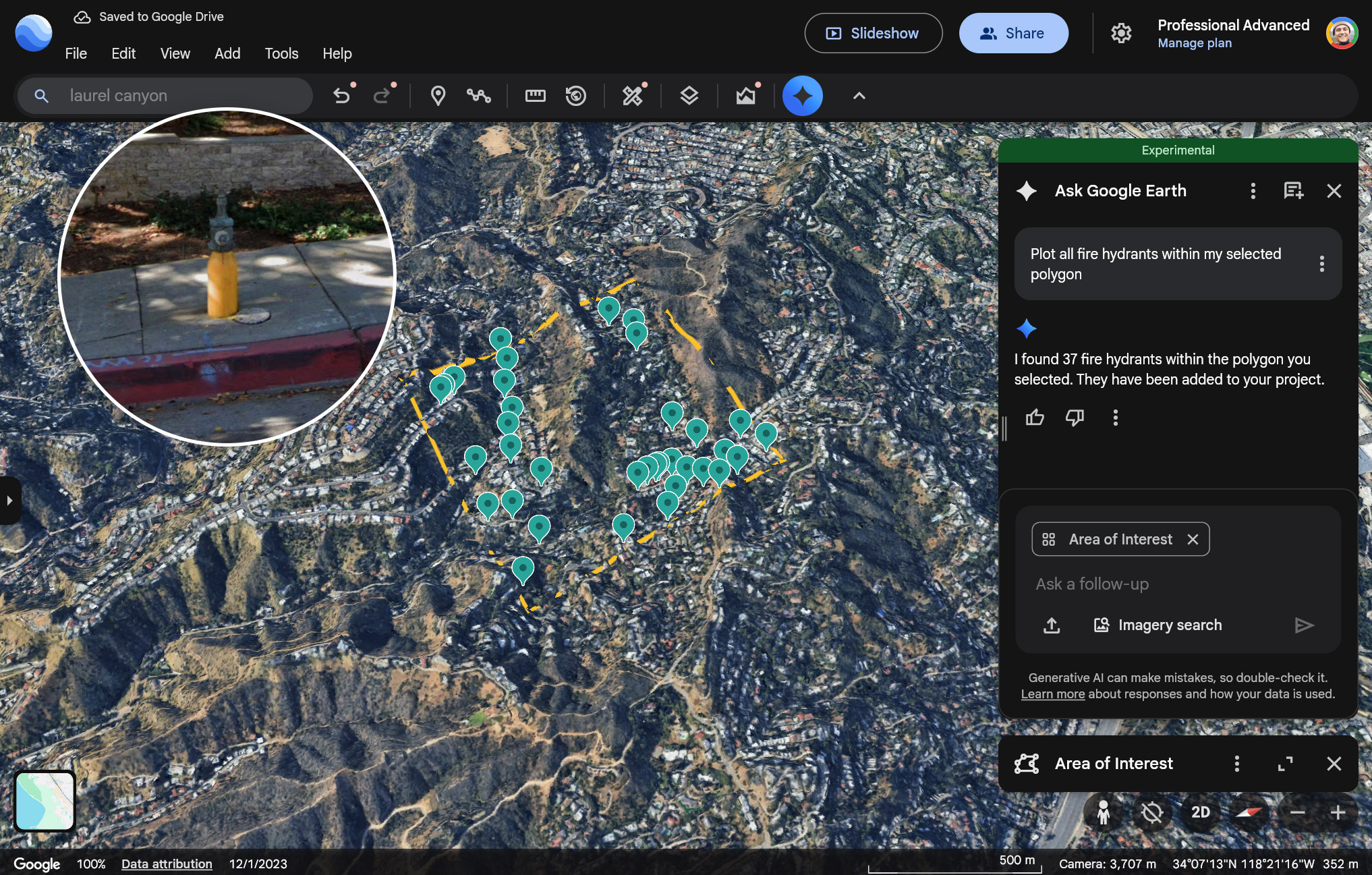

Designed the core experience around progressive disclosure and verifiable data provenance. Built features like Overhead Imagery Search and Infrastructure Insights so users can inspect the agent's logic, verify claims directly against source satellite and Street View imagery, and steer constraints in real time.

Overhead Imagery Search — detecting environmental patterns via natural language.

Infrastructure Insights — identifying assets within a user-defined polygon.

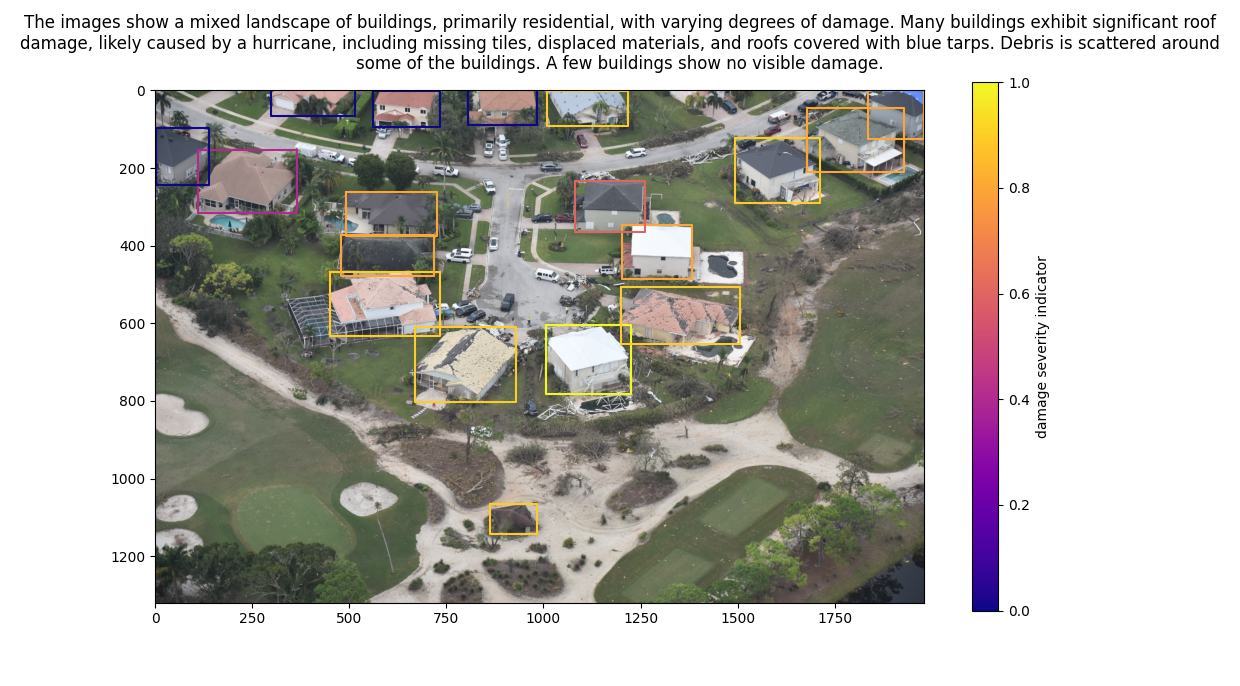

The Impact: Supporting infrastructure development, crisis response, and public health research.

The agent is actively deployed for high-stakes decision-making. By moving geospatial analysis from weeks-long workflows to real-time queries, the system supports humanitarian aid delivery for GiveDirectly, environmental health research at Boston Children's Hospital, and disease monitoring for the WHO. Beyond direct deployments, approximately 90% of the world's sustainable infrastructure planning, from EV charging networks to solar farm siting, relies on Google Earth data, and this agent makes that analysis accessible without GIS expertise.

From Weeks to Minutes: Planning EV Infrastructure

Finding the Perfect Solar Farm Locations

Patent · 2025

Pending Patent: Multimodal Satellite Imagery Reasoning